OS X (in particular Yosemite) is not known for it's reliable wifi connections. There are a number of solutions posted over the internet (see below) which I have not tried. However, if you, like me, have wifi issues after installing a recent os x update this might be a solution. The problem is that the update renames (backups) the discoveryd.plist file without installing a new one. Simple workaround (maintaing the backup and renaming the _bc to original):

- sudo mv com.apple.discoveryd_bc.plist com.apple.discoveryd.plist

- sudo reboot

Here are some mroe fixes from OS X Daily (have not tried / needed those myself).

Original article can be found here : http://osxdaily.com/2014/10/25/fix-wi-fi-problems-os-x-yosemite/

1: Remove Network Configuration & Preference Files

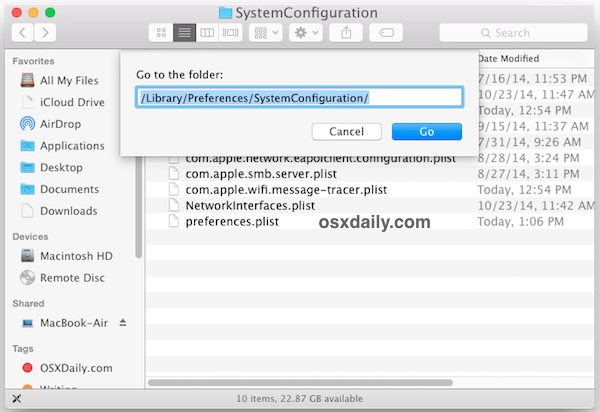

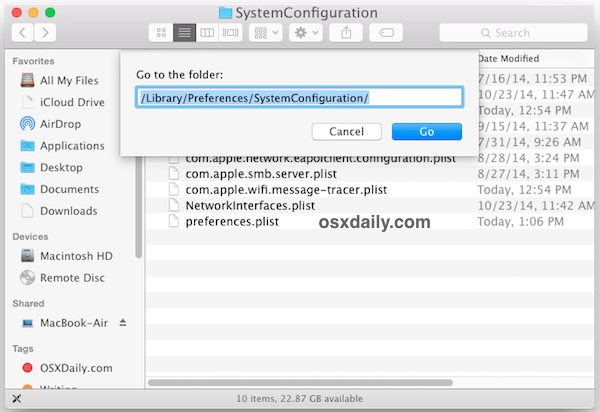

Manually trashing the network plist files should be your first line of troubleshooting. This is one of those tricks that consistently resolves

even the most stubborn wireless problems on Macs of nearly any OS X version. This is particularly effective for Macs who updated to Yosemite that may have a corrupt or dysfunctional preference file mucking things up:

- Turn Off Wi-Fi from the Wireless menu item

- From the OS X Finder, hit Command+Shift+G and enter the following path:

/Library/Preferences/SystemConfiguration/

- Within this folder locate and select the following files:

com.apple.airport.preferences.plist

com.apple.network.identification.plist

com.apple.wifi.message-tracer.plist

NetworkInterfaces.plist

preferences.plist

- Move all of these files into a folder on your Desktop called ‘wifi backups’ or something similar – we’re backing these up just in case you break something but if you regularly backup your Mac you can just delete the files instead since you could restore from Time Machine if need be

- Reboot the Mac

- Turn ON WI-Fi from the wireless network menu again

This forces OS X to recreate all network configuration files. This alone may resolve your problems, but if you’re continuing to have trouble we recommend following through with the second step which means using some custom network settings.

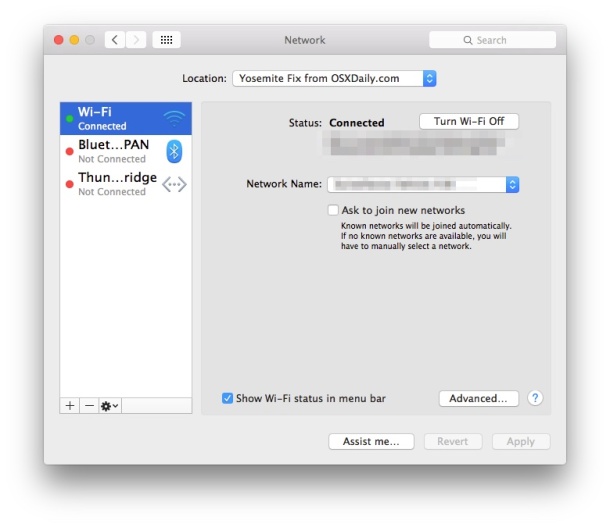

2: Create a New Wi-Fi Network Location with Custom DNS

What we’re doing here is creating a new network location which is going to have a configuration different from the defaults. First, we’ll use a completely new network setup. Then, we’ll set DNS on the computer rather than waiting for OS X to get DNS details from the wi-fi router, which alone can resolve many issues with DNS lookups, since Yosemite seems to be finicky with some routers. Finally, we’re going to set a custom MTU size that is slightly smaller than the default, which will get rejected less often by a router, it’s an old netadmin trick that has long been used to fix network troubles.

- Open the Apple menu and go to System Preferences, then choose “Network”

- Pull down the “Locations” menu and choose “Edit Locations”, then click the [+] plus button, give the new network location a name like “Yosemite WiFi” then click Done

- Next to “Network Name” join your desired wifi network as usual

- Now click the “Advanced” button, and go to the “DNS” tab

- Click the [+] plus button and specify a DNS server – we’re using 8.8.8.8 for Google DNS in this example but you should use the fastest DNS servers you can find for your location, it will vary. You can also use your own ISP DNS servers

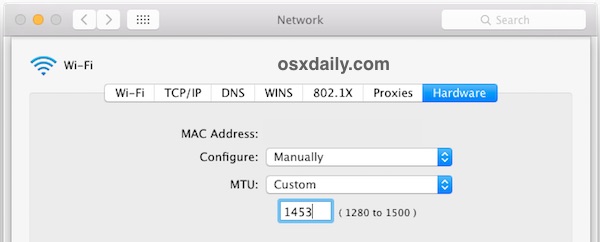

- Now go to the “Hardware” tab and click on ‘Configure’ and choose “Manually”

- Click on MTU and change it to “Custom” and set the MTU number to 1453 (this is a networking secret from ancient times, and yes it still works!), then click on “OK”

- Now click on “Apply” to set your network changes

Quit and relaunch any apps that require network access, like Safari, Chrome, Messages, Mail, and your wireless connectivity should be flawless and back at full speed at this point.

Reset SMC

Some users report that resetting the System Management Controller is sufficient to stir their Wi-Fi back into action. Since many users have a MacBook laptop, that’s what we’ll cover first:

- Turn off the MacBook Air or MacBook Pro

- Connect the power adapter to the Mac as usual

- On the keyboard, press and hold down the Shift+Control+Option keys and the Power button at the same time, hold them all for a few seconds

- Release all keys and the power button at the same time by lifting your hands away from the keyboard

- Boot the Mac as usual

Unload & Reload discoveryd to Fix DNS & Wi-Fi Failures in OS X Yosemite

Another trick that was left in the

comments (thanks Frank!) involves refreshing the discoveryd service by unloading and reloading it with the launchctl command. This is a bit curious but apparently it works for some users, suggesting there could be an issue with discovery or resolving DNS on some Yosemite Macs. It’s certainly worth a try if the above tricks failed to resolve your wi-fi connectivity problems in OS X 10.10, as there are a fair amount of positive reports with this one:

- Open Terminal (found in /Applications/Utilities/ or with Spotlight) and enter the following command:

sudo launchctl unload -w /System/Library/LaunchDaemons/com.apple.discoveryd.plist

- Hit return and enter an admin password to use the sudo command

- Now run the following command to reload discoveryd (this used to be called mDNSResponder)

sudo launchctl load -w /System/Library/LaunchDaemons/com.apple.discoveryd.plist

- Again hit Return to finish the command

You may need to relaunch apps that require network connectivity. Note that if you reboot the Mac with this one, you will have to repeat the above steps to unload and reload discoveryd into launchd.

Bonus OS X Yosemite Wi-Fi Troubleshooting Tricks

Here are some other less than ideal solutions that have been reported to remedy wi-fi issues in OS X Yosemite.

- Join a 2.4GHZ network (N network) – some users report no trouble with 2.4GHz networks

- Set the wi-fi routers 5GHz (G) channel to be somewhere between 50-120

- Turn Off Bluetooth – We have seen several reports that disabling Bluetooth will resolve wifi problems with some networks, but this is obviously not appropriate for Macs that have bluetooth accessories

Have you experienced wireless connectivity issues with OS X Yosemite? What have you tried, and how did you resolve them? Let us know what has been working to remedy your wifi troubles by leaving a comment!